What is Agent Observability?

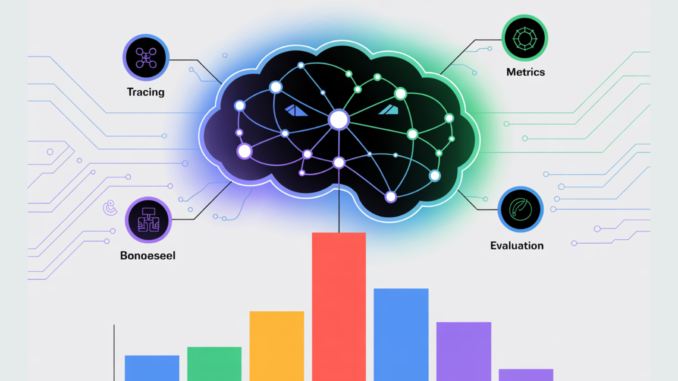

Agent observability is the discipline of instrumenting, tracing, evaluating, and monitoring AI agents across their full lifecycle—from planning and tool calls to memory writes and final outputs—so teams can debug failures, quantify quality and safety, control latency and cost, and meet governance requirements. In practice, it blends classic telemetry (traces, metrics, logs) with LLM-specific signals (token usage, tool success, hallucination rate, guardrail events) using emerging standards such as OpenTelemetry (OTel) GenAI semantic conventions for LLM and agent spans.

Why it’s hard: agents are non-deterministic, multi-step, and externally dependent (search, databases, APIs). Reliable systems need standardized tracing, continuous evals, and governed logging to be production-safe. Modern stacks (Arize Phoenix, LangSmith, Langfuse, OpenLLMetry) build on OTel to provide end-to-end traces, evals, and dashboards.

Top 7 best practices for reliable AI

Best practice 1: Adopt open telemetry standards for agents

Instrument agents with the OpenTelemetry (OTel) GenAI semantic conventions so every step is a span: planner → tool call(s) → memory read/write → output. Use agent spans (for planner/decision nodes) and LLM spans (for model calls), and emit GenAI metrics (latency, token counts, error types). This keeps data portable across backends.

Implementation tips

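Keep one stable trace ID across retries and branches, and give each attempt or branch its own span linked to its parent so the steps of a run can be stitched back together.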

Record model/version, prompt hash, temperature, tool name, context length, and cache hit as attributes.

If you proxy vendors, keep normalized attributes per OTel so you can compare models.
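The tips above can be sketched without any tracing SDK. The example below is a toy in-memory tracer written only with the standard library, not the OpenTelemetry API; `Tracer`, `Span`, and the model and tool names are hypothetical. The `gen_ai.*` keys follow the OTel GenAI semantic conventions as commonly documented, which are still evolving, so check them against the current spec. The `app.*` keys are made-up custom attributes for the prompt hash and cache hit.

```python
import hashlib
import secrets
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    name: str
    trace_id: str
    span_id: str
    parent_id: Optional[str]
    attributes: dict = field(default_factory=dict)
    duration_ms: float = 0.0


class Tracer:
    """Toy tracer: one trace ID per run, a fresh span ID per step."""

    def __init__(self):
        self.trace_id = secrets.token_hex(16)
        self.finished = []
        self._stack = []

    @contextmanager
    def span(self, name, attributes=None):
        # The innermost open span (if any) becomes the parent.
        parent_id = self._stack[-1].span_id if self._stack else None
        current = Span(name, self.trace_id, secrets.token_hex(8),
                       parent_id, dict(attributes or {}))
        self._stack.append(current)
        start = time.perf_counter()
        try:
            yield current
        finally:
            current.duration_ms = (time.perf_counter() - start) * 1000
            self._stack.pop()
            self.finished.append(current)


tracer = Tracer()
prompt = "Summarize the open tickets for account 42."

with tracer.span("invoke_agent planner", {"gen_ai.operation.name": "invoke_agent"}):
    with tracer.span("chat example-model", {
        "gen_ai.operation.name": "chat",
        "gen_ai.request.model": "example-model",   # hypothetical model name
        "gen_ai.request.temperature": 0.2,
        "app.prompt.sha256": hashlib.sha256(prompt.encode()).hexdigest(),
        "app.cache.hit": False,
    }) as llm_span:
        # Placeholder token counts; a real client would report these.
        llm_span.attributes["gen_ai.usage.input_tokens"] = 12
        llm_span.attributes["gen_ai.usage.output_tokens"] = 30
    with tracer.span("execute_tool search_tickets", {
        "gen_ai.operation.name": "execute_tool",
        "gen_ai.tool.name": "search_tickets",      # hypothetical tool
    }):
        pass

for s in tracer.finished:
    print(s.name, s.parent_id, sorted(s.attributes))
```

Storing a hash of the prompt rather than the prompt itself lets you group runs by prompt version without copying user content into span attributes.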

Best practice 2: Trace end-to-end and enable one-click replay

Make every production run reproducible. Store input artifacts, tool I/O, prompt/guardrail configs, and model/router decisions in the trace; enable replay to step through failures. Tools like LangSmith, Arize Phoenix, Langfuse, and OpenLLMetry provide step-level traces for agents and integrate with OTel backends.

Track at minimum: request ID, user/session (pseudonymous), parent span, tool result summaries, token usage, latency breakdown by step.
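One way to make a run replayable is to route every external call through a single interface that either records live results or serves recorded ones. The following is a minimal sketch under that assumption; `RunTrace`, `Recorder`, `Replayer`, `run_agent`, and the step names are all hypothetical and do not reflect how any of the tools named above implement replay.

```python
import json
import time
from dataclasses import asdict, dataclass, field


@dataclass
class StepRecord:
    step: str          # e.g. "tool:search" or "llm:answer"
    inputs: dict
    output: str
    latency_ms: float
    tokens: int = 0


@dataclass
class RunTrace:
    request_id: str
    session: str       # pseudonymous ID, never a raw user identifier
    config: dict       # prompt/guardrail/model settings in effect
    steps: list = field(default_factory=list)

    def to_json(self):
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw):
        data = json.loads(raw)
        data["steps"] = [StepRecord(**s) for s in data["steps"]]
        return cls(**data)


class Recorder:
    """Calls live dependencies and appends each call to the trace."""

    def __init__(self, trace, handlers):
        self.trace, self.handlers = trace, handlers

    def call(self, step, **inputs):
        start = time.perf_counter()
        output = self.handlers[step](**inputs)
        latency_ms = (time.perf_counter() - start) * 1000
        self.trace.steps.append(StepRecord(step, inputs, output, latency_ms))
        return output


class Replayer:
    """Serves recorded outputs in order instead of calling live dependencies."""

    def __init__(self, trace):
        self._steps = iter(trace.steps)

    def call(self, step, **inputs):
        recorded = next(self._steps)
        if (recorded.step, recorded.inputs) != (step, inputs):
            raise RuntimeError(f"replay diverged at {step!r}")
        return recorded.output


def run_agent(question, io):
    """Hypothetical two-step agent: look something up, then answer."""
    docs = io.call("tool:search", query=question)
    return io.call("llm:answer", question=question, context=docs)


live = {
    "tool:search": lambda query: f"doc about {query}",
    "llm:answer": lambda question, context: f"Based on '{context}': yes",
}
trace = RunTrace("req-001", "sess-a1b2", {"model": "example-model", "temperature": 0.0})
original = run_agent("refund policy", Recorder(trace, live))

stored = trace.to_json()  # what you would persist alongside the trace
replayed = run_agent("refund policy", Replayer(RunTrace.from_json(stored)))
print(original == replayed)
```

The divergence check in `Replayer.call` is the useful part when debugging: if a code change makes the agent issue a different call than the one recorded, replay fails at the exact step where behavior changed.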

Best practice 3: Run continuous evaluations (offline & online)

Create scenario suites that reflect real workflows and edge cases; run them at PR time and on canaries. Combine heuristics (exact match, BLEU, groundedness checks) with LLM-as-judge (calibrated) and task-specific scoring. Stream online feedback (thumbs up/down, corrections) back into datasets. Recent guidance emphasizes continuous evals in both dev and prod rather than one-off benchmarks.

Useful frameworks: TruLens, DeepEval, MLflow LLM Evaluate; observability platforms embed evals alongside traces so you can diff across model/prompt versions.
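As a rough illustration of the heuristic half of such a suite, the sketch below scores a stub agent with exact match and a crude token-overlap groundedness check. It is not the API of TruLens, DeepEval, or MLflow; `Case`, `evaluate`, `stub_agent`, and the 0.8 threshold are assumptions chosen for the example, and an LLM-as-judge scorer would plug in as another function alongside these.

```python
import re
from dataclasses import dataclass


@dataclass
class Case:
    question: str
    expected: str
    context: str   # retrieved text the answer should be grounded in


def normalize(text):
    # Lowercase, drop punctuation, split into tokens.
    return re.sub(r"[^a-z0-9 ]", "", text.lower()).split()


def exact_match(answer, expected):
    return float(normalize(answer) == normalize(expected))


def groundedness(answer, context):
    """Share of answer tokens that also occur in the context (crude heuristic)."""
    tokens = normalize(answer)
    if not tokens:
        return 0.0
    context_tokens = set(normalize(context))
    return sum(t in context_tokens for t in tokens) / len(tokens)


def evaluate(agent, cases, min_grounded=0.8):
    rows = []
    for case in cases:
        answer = agent(case.question, case.context)
        rows.append({
            "question": case.question,
            "exact": exact_match(answer, case.expected),
            "grounded": groundedness(answer, case.context),
        })
    n = len(rows)
    return {
        "exact_match": sum(r["exact"] for r in rows) / n,
        "grounded_rate": sum(r["grounded"] >= min_grounded for r in rows) / n,
        "rows": rows,
    }


cases = [
    Case("What is the refund window?", "30 days",
         "Refunds are accepted within 30 days of purchase."),
    Case("Which plan includes SSO?", "Enterprise",
         "SSO is available on the Enterprise plan."),
]


def stub_agent(question, context):
    # Stand-in for a real agent; gets the second case wrong on purpose.
    return "30 days" if "refund" in question.lower() else "Business"


report = evaluate(stub_agent, cases)
print(report["exact_match"], report["grounded_rate"])
```

In CI, a script like this could exit non-zero when either aggregate drops below the value recorded for the main branch, which is one way to run the suite at PR time.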

Best practice 4: Define reliability SLOs and alert on AI-specific signals

Go beyond the “four golden signals.” Establish SLOs for answer quality, tool-call success rate, hallucination/guardrail-violation rate, retry rate, time-to-first-token, end-to-end latency, cost per task, and cache hit rate; emit them as OTel GenAI metrics. Alert on SLO burn and annotate incidents with offending traces for rapid triage.
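Alerting on SLO burn usually means comparing the observed failure rate with the error budget the SLO allows. The sketch below assumes a simple good/total event count per window and a two-window paging rule; `Slo`, `burn_rate`, and `should_page` are hypothetical names, and the 14.4 threshold is a commonly cited starting point for a 30-day SLO rather than something this article prescribes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Slo:
    name: str
    target: float   # e.g. 0.99 means 99% of events must be good

    @property
    def error_budget(self):
        return 1.0 - self.target


def burn_rate(slo, good, total):
    """How fast the error budget is being spent; 1.0 means exactly on budget."""
    if total == 0:
        return 0.0
    bad_fraction = (total - good) / total
    return bad_fraction / slo.error_budget


def should_page(slo, short_window, long_window, threshold=14.4):
    # Require both windows to burn fast: the long window filters out blips,
    # the short window confirms the problem is still happening.
    return (burn_rate(slo, *short_window) >= threshold
            and burn_rate(slo, *long_window) >= threshold)


tool_success = Slo("tool-call success rate", target=0.99)

# (good, total) counts per window; illustrative numbers only.
last_5_min = (80, 100)
last_hour = (850, 1000)

print(round(burn_rate(tool_success, *last_5_min), 2))
print(should_page(tool_success, last_5_min, last_hour))
```

The same functions work for any of the signals listed above once each event is classified as good or bad, for example a response that passed the groundedness check or a task that stayed under its cost ceiling.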

Best practice 5: Enforce guardrails and log policy events (without storing secrets or free-form rationales)

Validate structured outputs (JSON Schemas), apply toxicity/safety checks, detect prompt injection, and enforce tool allow-lists with least privilege. Log which guardrail fired and what mitigation occurred (block, rewrite, downgrade) as events; do not persist secrets or verbatim chain-of-thought. Guardrails frameworks and vendor cookbooks show patterns for real-time validation.
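A minimal sketch of two of these checks, assuming the model proposes tool calls as JSON: a structural validation step and a tool allow-list, each emitting a policy event that names the guardrail and the mitigation but never the offending payload. The hand-rolled field check stands in for a real JSON Schema validator, and `ALLOWED_TOOLS`, `policy_event`, and `check_tool_call` are hypothetical.

```python
import json
import logging

logger = logging.getLogger("guardrails")

ALLOWED_TOOLS = {"search_docs", "get_order_status"}    # hypothetical allow-list
REQUIRED_FIELDS = {"tool": str, "arguments": dict}     # stand-in for a JSON Schema


def policy_event(guardrail, mitigation, request_id):
    """Record which guardrail fired and what was done, not the raw content."""
    event = {
        "event": "guardrail.triggered",
        "guardrail": guardrail,
        "mitigation": mitigation,
        "request_id": request_id,
    }
    logger.warning(json.dumps(event, sort_keys=True))
    return event


def check_tool_call(raw_output, request_id):
    """Return (tool_call, None) if allowed, or (None, event) if blocked."""
    try:
        call = json.loads(raw_output)
    except json.JSONDecodeError:
        return None, policy_event("structured_output", "block", request_id)

    well_formed = isinstance(call, dict) and all(
        isinstance(call.get(key), expected)
        for key, expected in REQUIRED_FIELDS.items()
    )
    if not well_formed:
        return None, policy_event("structured_output", "block", request_id)

    if call["tool"] not in ALLOWED_TOOLS:
        return None, policy_event("tool_allow_list", "block", request_id)

    return call, None


ok, _ = check_tool_call(
    '{"tool": "search_docs", "arguments": {"q": "refunds"}}', "req-1")
_, blocked = check_tool_call(
    '{"tool": "delete_user", "arguments": {"id": 7}}', "req-2")
_, malformed = check_tool_call("not json", "req-3")

print(ok["tool"], blocked["guardrail"], malformed["guardrail"])
```

Because the event carries only the guardrail name, mitigation, and request ID, an investigator can still jump from the alert to the full trace under whatever access controls protect it.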

Best practice 6: Control cost and latency with routing & budgeting telemetry

Instrument per-request tokens, vendor/API costs, rate-limit/backoff events, cache hits, and router decisions. Gate expensive paths behind budgets and SLO-aware routers; platforms like Helicone expose cost/latency analytics and model routing that plug into your traces.
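Gating an expensive path behind a budget can be sketched as a small router that estimates cost before choosing a model and records every decision. This is an illustrative design, not Helicone's API; `BudgetRouter`, `ModelOption`, the model names, and the prices are assumptions.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ModelOption:
    name: str
    usd_per_1k_tokens: float   # illustrative price, not a real vendor rate


@dataclass
class BudgetRouter:
    premium: ModelOption
    fallback: ModelOption
    budget_usd: float
    spent_usd: float = 0.0
    decisions: list = field(default_factory=list)

    @staticmethod
    def estimate(model, tokens):
        return model.usd_per_1k_tokens * tokens / 1000

    def route(self, request_id, est_tokens, needs_premium):
        choice, reason = self.fallback, "default"
        if needs_premium:
            projected = self.spent_usd + self.estimate(self.premium, est_tokens)
            if projected <= self.budget_usd:
                choice, reason = self.premium, "within_budget"
            else:
                reason = "budget_exhausted"   # downgrade instead of failing
        cost = self.estimate(choice, est_tokens)
        self.spent_usd += cost
        # Each decision is telemetry: attach it to the request's trace.
        self.decisions.append({
            "request_id": request_id,
            "model": choice.name,
            "reason": reason,
            "est_cost_usd": cost,
        })
        return choice


router = BudgetRouter(
    premium=ModelOption("large-model", 0.01),
    fallback=ModelOption("small-model", 0.001),
    budget_usd=0.05,
)
router.route("req-1", est_tokens=4000, needs_premium=True)
router.route("req-2", est_tokens=4000, needs_premium=True)
router.route("req-3", est_tokens=1000, needs_premium=False)

for decision in router.decisions:
    print(decision["request_id"], decision["model"], decision["reason"])
```

Recording the reason next to the chosen model makes it possible to tell, after the fact, whether a quality regression came from the model itself or from the router downgrading requests once the budget ran out.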

Best practice 7: Align with governance standards (NIST AI RMF, ISO/IEC 42001)

Post-deployment monitoring, incident response, human feedback capture, and change management are explicitly addressed in leading governance frameworks. Map your observability and eval pipelines to NIST AI RMF MANAGE 4.1 and to ISO/IEC 42001 lifecycle monitoring requirements. This reduces audit friction and clarifies operational roles.

Conclusion

Agent observability provides the foundation for making AI systems trustworthy, reliable, and production-ready. By adopting open telemetry standards, tracing agent behavior end-to-end, embedding continuous evaluations, defining AI-specific SLOs, enforcing guardrails, tracking cost and latency, and aligning with governance frameworks, dev teams can transform opaque agent workflows into transparent, measurable, and auditable processes. The seven best practices outlined here move beyond dashboards: they establish a systematic approach to monitoring and improving agents across quality, safety, cost, and compliance dimensions. Ultimately, strong observability is not just a technical safeguard but a prerequisite for scaling AI agents into real-world, business-critical applications.

Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.
